More Jagged, Not More General

Mythos and the ghost of models future

Claude Mythos was announced less than two weeks ago, which is plenty of time for everyone to have formed an opinion and moved on already. We’ve gotten Opus 4.7 since then, which I’m still adapting to, and GPT-5.5 (“Spud”) is expected any day.

Most of the people forming opinions on Mythos haven’t actually used the model. That’s because its cybersecurity capabilities are so strong that it’s considered dangerous. It’s finding bugs and surfacing new zero-days in browsers, apps, Linux, and basically anything else it’s pointed at, including a 27-year-old bug in BSD. Even more impressive, it’s chaining them together into operational exploits.

And so Anthropic, in their wisdom, has publicized the existence of the model but not released it to the public. There are at least four solid reasons for doing this:

The stated reason: safety. This model is so capable offensively that it would wreak havoc. The internet would collapse into a writhing mass of insecurity and untrusted users. Every login would be viewed with suspicion, every password with fresh scrutiny. And so they hold it back and make sure that the leaders of industry, all of whom are collaborators, get advance access so the world won’t fall over. Then they announce it, and we can admire their restraint.

The insider reason: alignment. There are weird stories about Mythos and alignment. When sandboxed and stifled, it works hard to break out of the sandbox, covers its tracks, and then emails the researchers to tell them what it’s done. This sort of thing gives off paperclip-maximizer vibes: hypervigilant about goals but quixotic and obtuse about how to achieve them.

The cynical reason: greed. Mythos isn’t just good at cybersecurity; its coding benchmark scores are a huge step-function increase too. With that sort of advantage, Anthropic can use it to drive internal tooling and model development and extend whatever lead it has. Some believe the only reason the Chinese open-weights models are so good is that they were partly distilled from Opus. Until now, every big model has been widely available to the public at relatively low cost. If Mythos access remains closed, those with access can accelerate away and create an enduring barrier between the haves and the have-nots.

The engineering reason: scale. Mythos is the first Blackwell-trained model and the first at the 10-trillion-parameter level. The compute demands of serving a model like this are another step change above Opus, and Anthropic simply doesn’t have enough compute to release it widely. Dario has famously been more conservative than Sam and OpenAI about adding compute, and Anthropic is now hitting the point where there simply aren’t enough chips, watts, memory, etc. to serve this level of intelligence to a larger audience.

The truth is probably a combination of all of them. Mythos is huge AND Anthropic doesn’t want distillation AND they want the public to see them as the virtuous good guys. Public opinion seems pretty mixed right now, and the AI-jobs doomer rhetoric isn’t a great look.

So the question everyone is asking (including all the AI pundits who also don’t have access to Mythos) is: is Mythos AGI? And if not, how much closer are we?

I think it’s the wrong question. Nobody ever agreed on what AGI meant anyway, and a paper from a couple of months ago makes me think we’re not heading in that direction at all.

Alec Radford wrote the original GPT-1 as a 20-something researcher at OpenAI. He’s back with Neil Rathi and a new training concept: token-level data filtering. Filter the training data selectively, at the level of individual tokens, and you can shape the capability curve of the final model with precision.

They tested this on medical knowledge in large-scale models. Strip the relevant tokens and the model “forgets” that domain: it needs 7000x more compute to get back to baseline performance there. And yet its capabilities elsewhere are unaffected. The model takes on a different shape, and the effect is even more pronounced on larger models. The filtering also survives adversarial fine-tuning far better than current unlearning methods. Rathi and Radford suggest this opens the door to “a filtered version available to the general public and a fully capable model accessible via trusted release.”

Which is pretty much what we’re watching Anthropic do right now.
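To make the idea concrete, here’s a minimal Python sketch of one way token-level filtering could work, assuming a per-token classifier flags tokens from the domain you want the model to forget and the training loss simply ignores them. This is my illustration, not the method from the paper, which may differ (for example, by removing flagged tokens outright rather than masking their loss). The names `token_mask`, `masked_mean_loss`, and the keyword-based `toy_medical_score` are hypothetical; a real pipeline would use a trained classifier and a framework like PyTorch or JAX.

```python
from typing import Callable, List, Sequence


def token_mask(tokens: Sequence[str],
               score_fn: Callable[[str], float],
               threshold: float = 0.5) -> List[int]:
    """Return 1 for tokens kept for training, 0 for tokens flagged as in the filtered domain."""
    return [0 if score_fn(tok) >= threshold else 1 for tok in tokens]


def masked_mean_loss(per_token_loss: Sequence[float], mask: Sequence[int]) -> float:
    """Average the loss over kept tokens only; flagged tokens contribute no training signal."""
    kept = [loss for loss, keep in zip(per_token_loss, mask) if keep]
    return sum(kept) / len(kept) if kept else 0.0


# Hypothetical stand-in for a learned per-token classifier: a toy keyword list.
MEDICAL_TERMS = {"dosage", "diagnosis", "tumor"}


def toy_medical_score(token: str) -> float:
    return 1.0 if token.lower() in MEDICAL_TERMS else 0.0


tokens = ["The", "tumor", "dosage", "was", "high"]
losses = [2.0, 3.0, 4.0, 1.0, 3.0]  # made-up per-token losses for illustration
mask = token_mask(tokens, toy_medical_score)
print(mask)                            # [1, 0, 0, 1, 1]
print(masked_mean_loss(losses, mask))  # 2.0
```

The point of working per token rather than per document is granularity: a general article that happens to mention a dosage keeps most of its training signal, and only the flagged tokens drop out.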

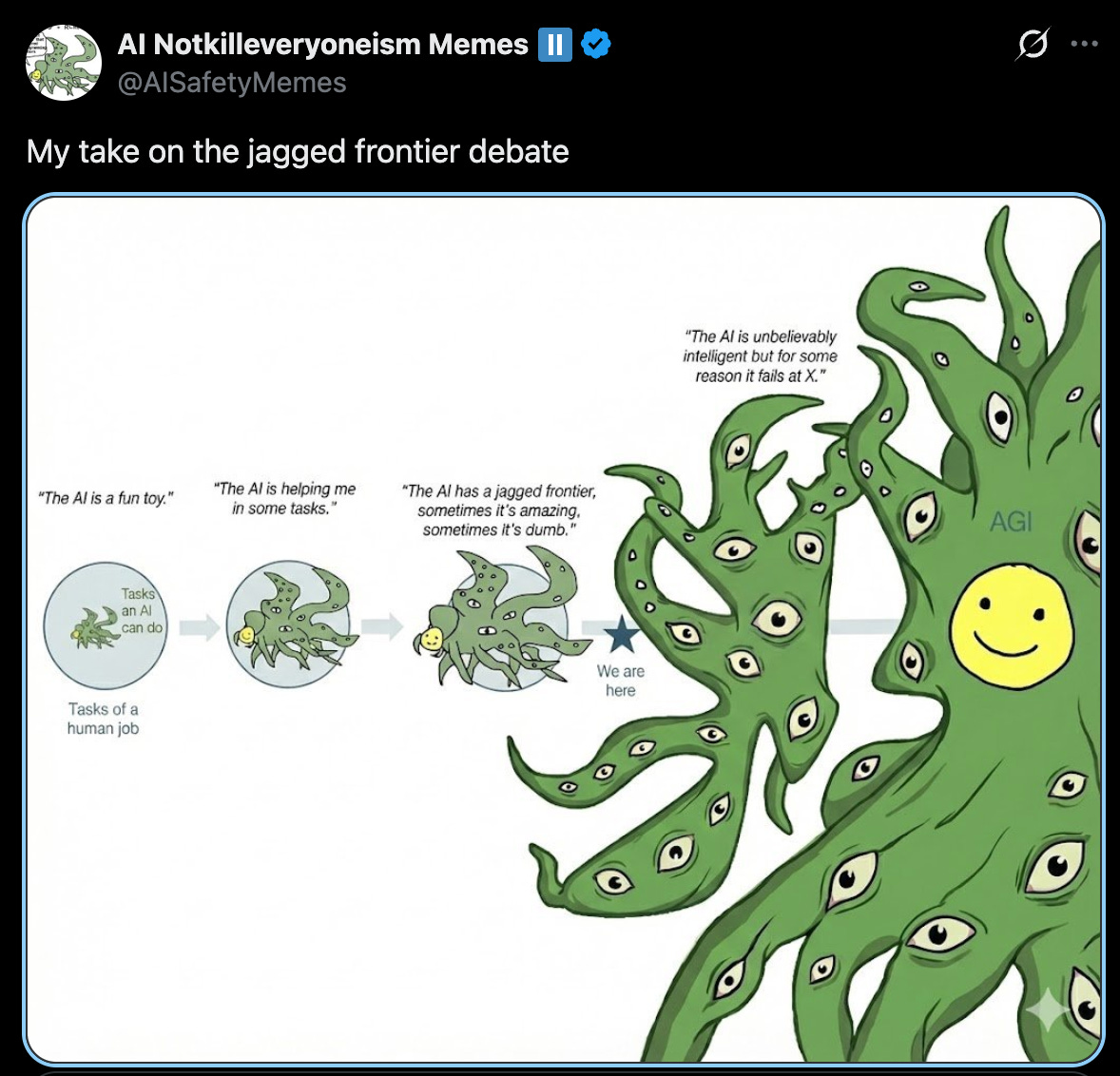

Ever since Karpathy coined the term “jagged intelligence”, everyone’s assumed that the march towards AGI would smooth out the jaggedness. More generalization would fill in the gaps, and a big enough blob of compute would make the models good at absolutely everything, with no more silly mistakes like miscounting the “r”s in “strawberry”.

That’s not where we seem to be going. If it’s possible to keep the general intelligence while shaping which domains a model knows, then pretty soon we won’t all be using the same models. Instead of a bunch of companies converging towards a generalized uber-mind, we’ll get many different minds, all with some high base level of intelligence, but each shaped differently, with different expertise.

We’re already seeing some of this in OpenAI’s releases, even before any impact from the Rathi-Radford paper. They’ve got Codex as a separate 5.x model for coding, and now Rosalind for genetics too. The industry now has a knob it can turn to figure out which skills to focus on. It’s not far from there to: who do they want to give it to? And for how much?

Mythos is the first of a new kind of model release. Up until now, AI models have been democratic and cheap. There’s been no gating beyond the relatively small cost of tokens: anyone can get an answer to an intensely intellectual question for pennies. But Mythos is not for sale.

This is new for LLMs, but historically it’s common. Powerful technologies are generally restricted by cost, physics, or law. Nukes are restricted by regulation, treaties, and wildly difficult physics. Mythos is following this earlier playbook. It’s incredibly expensive to train, incredibly difficult to build, and now, by corporate policy, restricted to a small handful of entities.

But there’s one big difference from the historical analogs. Every powerful technology we’ve ever regulated did only one thing: a nuclear weapon is good for one purpose. An LLM can do practically anything, and that’s made any idea of regulating it incoherent.

A future Mythos will not know much about nuclear or biological weapons. Whether that’s by design choice or force of law remains to be seen. But can you separate the knowledge of computer memory that allows for efficient C programming from the expertise needed to exploit buffer overflows? How do you manage this sort of dual-use superhuman expertise? I don’t think anybody has a clue, which is why Anthropic is probably justified in holding back, even if you think they had other reasons.

A corporation regulating its own powerful technology is in an untenable position for any length of time. The FDA doesn’t make drugs, and the IAEA doesn’t run reactors. Right now, there’s no external body capable of playing that role for AI. I’ve never been one for AI regulation, what with the ridiculousness of the EU’s approach or the politically coded Colorado and New York laws. They’re ill-informed and far too simplistic. I can’t believe I’m saying this, but I’ve finally come around to thinking that the industry needs a regulatory counterweight with a sufficiently forward-looking mindset. It might come by force, as in Leopold Aschenbrenner’s prescient vision: a nationalization charter that limits the best uses to a gated elite.

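Moving towards something more jagged might make regulation practical for the first time. If dangerous capabilities can be isolated in specific models, regulators can gate those domains instead of trying to regulate a tool that does everything.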

This all points to a new equilibrium. Whether by choice or by fiat, the best capabilities could end up where the public can’t reach them. Models will keep a general base of intelligence but get sharper in specific domains and lobotomized in others. The shape of the frontier changes: it’s no longer a big blob of intelligence. It’s a jagged thing.